Running Keboola Connection backend in Docker

One of the more challenging things I worked on recently was preparing an environment for running Keboola Connection (KBC) in Docker.

The application has two main parts: the KBC UI and a PHP backend that provides multiple APIs. This article is about the latter.

The aim was to speed up the development process and provide the backend as a “black box” that the other components we develop can communicate with, for example client libraries like the Storage API client or the Manage API client.

At the beginning, we defined requirements for the new environment:

- Easy to start up and set up (ideally one command to start the whole application and all linked services)

- Automated task running (specifically AWS SQS queues polling)

- Single point for logging with an easy way of watching (like Papertrail, but on localhost)

- Ability to run commands from CLI independently

These requirements came from the architecture of our production environment, with one difference: there will be a separate service for each part of the system.

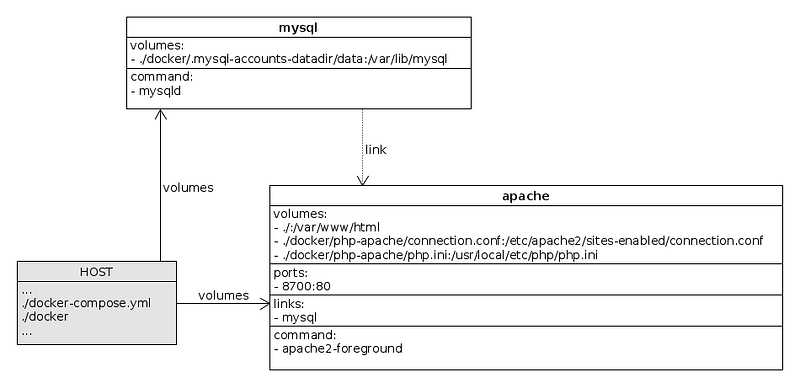

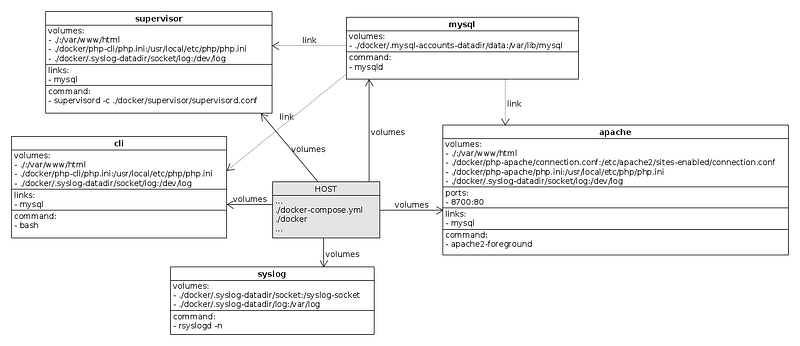

Basic architecture

The beginning was pretty straightforward. I chose to start by preparing the Apache and MySQL services. With the standard php:5.6-apache image and all code mounted through a volume to /var/www/html, the Apache service was almost identical to the base image.

To provide a changeable configuration, two other volumes have been mounted to the service:

- /etc/apache2/sites-enabled/connection.conf for VirtualHost configuration

- /usr/local/etc/php/php.ini for PHP configuration

With MySQL, it was even easier. The official MySQL image mysql:5.6 is in the repository, so there’s not much to say.

Don’t forget to mount your data directory to /var/lib/mysql to keep MySQL data stored on the host.
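Put together, the two services described above might look roughly like this in docker-compose.yml. This is a minimal sketch rather than our actual file: the host paths (./docker/..., ./data/mysql), the published port and the root password are placeholders chosen for illustration.

```yaml
# docker-compose.yml (sketch; host paths, port and password are placeholders)
version: "2"

services:
  apache:
    image: php:5.6-apache
    ports:
      - "80:80"   # assumed port mapping
    volumes:
      # application code shared from the host
      - .:/var/www/html
      # VirtualHost configuration
      - ./docker/apache/connection.conf:/etc/apache2/sites-enabled/connection.conf
      # PHP configuration
      - ./docker/php/php.ini:/usr/local/etc/php/php.ini

  mysql:
    image: mysql:5.6
    environment:
      # the official image needs one of its root-password variables on first start
      MYSQL_ROOT_PASSWORD: root
    volumes:
      # keep MySQL data on the host
      - ./data/mysql:/var/lib/mysql
```

Keeping the configuration files on the host means they can be changed without rebuilding an image; restarting the service is enough.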

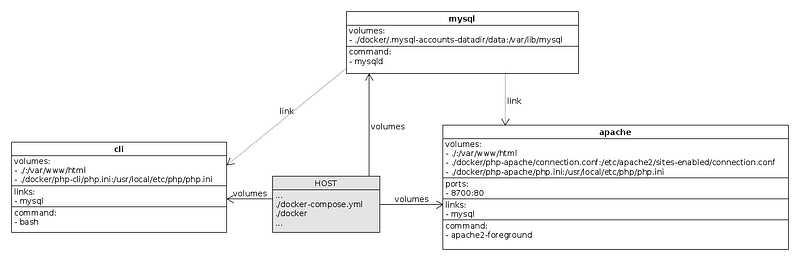

Separate PHP CLI service

For small applications, the same service for Apache and CLI is enough. Just start the web server in the background, and then use bash to run some PHP commands. This is handy, but mostly for a “one man show” development of a personal page or blog.

Each service should do one thing, and services should only share data with each other.

So, I prepared another service running PHP, based on the php:5.6 image and with a configuration similar to that of the Apache service (also sharing the same code from the host).

This service is primarily for executing single-purpose commands, such as database initialization and migration, dependency installation (e.g. with Composer) or cache clearing.

Mount volumes with PHP code to the same place in all services to prevent future problems with paths.
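A CLI service along these lines could be declared as follows. Treat it as a sketch under assumptions: the service name cli matches the commands used in this article, but the host paths are placeholders, and a real image would have to add tools such as Composer on top of php:5.6.

```yaml
# docker-compose.yml fragment (sketch; host paths are placeholders)
version: "2"

services:
  cli:
    # assumption: in practice an image built on php:5.6 that also ships Composer
    image: php:5.6
    # run commands from the code directory so relative paths like ./migrations.sh resolve
    working_dir: /var/www/html
    volumes:
      # same code, mounted to the same path as in the Apache service
      - .:/var/www/html
      - ./docker/php/php.ini:/usr/local/etc/php/php.ini
```

Because the service has no long-running command, it only exists while a one-off command is being executed with docker-compose run.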

This is how we’re running commands through the CLI service:

docker-compose run --rm cli composer install

docker-compose run --rm cli ./migrations.sh migrations:migrate

Another PHP service and Supervisor

We have small scripts that poll queues. These scripts have to run all the time without interruption; simply put, we must never stop processing tasks from the queues.

At first, I considered a cron service, but it wasn’t a good fit for this case. We have to make sure that the queue polling is always running, not execute the polling at intervals.

In the production environment, there’s a system service responsible for running processes polling the AWS SQS queues. That’s acceptable, but I wanted some interaction with the processes (kill them, restart them or easily see if they’re running).

I ended up with another service based on the php:5.6 image and small Dockerfile modifications to install Supervisor. Supervisor is a system built to handle processes and make sure they’re running.

Volumes are mounted the same way as in the previous CLI and Apache services, but with an additional volume for Supervisor’s configuration, so we can easily add more processes to Supervisor without modifying the image.
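In docker-compose.yml, such a service might be sketched like this. The build context ./docker/supervisor and the host paths are hypothetical, and the configuration paths assume the Debian package layout, where /etc/supervisor/supervisord.conf includes every file in /etc/supervisor/conf.d.

```yaml
# docker-compose.yml fragment (sketch; build context and host paths are hypothetical)
version: "2"

services:
  supervisor:
    # a Dockerfile based on php:5.6 that installs Supervisor
    build: ./docker/supervisor
    # keep supervisord in the foreground so the container stays up
    command: supervisord --nodaemon --configuration /etc/supervisor/supervisord.conf
    volumes:
      - .:/var/www/html
      - ./docker/php/php.ini:/usr/local/etc/php/php.ini
      # program definitions, editable on the host without rebuilding the image
      - ./docker/supervisor/conf.d:/etc/supervisor/conf.d
```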

Snippet from Supervisor service configuration:

[program:storage-queue-receive]

directory=/var/www/html

command=./queues.sh storage:queue:receive

autorestart=true

“One Syslog to rule them all”

Each service running PHP is configured to log its events to syslog, because we need a single place to look for certain events or errors. But none of the previously mentioned services runs syslog.

If you know Docker a bit, you know there’s an option to use the host system’s syslog. After setting this option, the container logs will be written to syslog on the host machine (or on a remote server).
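For reference, this option is Docker's syslog logging driver, which forwards a container's output to syslog. A sketch of how it can be enabled per service in a version 2 compose file; the remote address is a placeholder:

```yaml
# docker-compose.yml fragment (sketch)
version: "2"

services:
  apache:
    image: php:5.6-apache
    logging:
      # forward the container's stdout/stderr to syslog on the Docker host
      driver: syslog
      # optional: send to a remote syslog server instead (placeholder address)
      # options:
      #   syslog-address: "udp://192.0.2.10:514"
```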

But it wasn’t enough for us, since we wanted to see logs only from specific applications (not the entire host system’s syslog); specifically only from those which write to syslog and are defined in the docker-compose.yml file.

On the other hand, I didn’t want to mount my host’s syslog into other Docker services.

Solving this took more time than expected, but the result is very promising. We used a separate service that runs syslog. This service shares syslog’s socket with the other services through a volume, and those services log to this socket.

Any file, even a socket, can be mounted as a volume into Docker services.

Logs from the Syslog service are also on the shared volume, and there’s another service called Syslog-watcher that is responsible for dumping these logs with a simple tail command.
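The socket sharing described above could be wired up roughly as follows. Everything specific here is an assumption made for illustration: the syslog image, the directory where it creates its socket, the log file name, and the busybox image used for the watcher.

```yaml
# docker-compose.yml fragment (sketch; images, paths and file names are hypothetical)
version: "2"

services:
  syslog:
    # an image running a syslog daemon, configured to create its socket at
    # /var/run/syslog/log and to write its log file to /var/log/shared/syslog.log
    build: ./docker/syslog
    volumes:
      - ./docker/syslog/run:/var/run/syslog
      - ./docker/syslog/logs:/var/log/shared

  apache:
    image: php:5.6-apache
    depends_on:
      - syslog
    volumes:
      # the socket created by the syslog service, mounted where syslog() writes by default;
      # the socket file must already exist, otherwise Docker creates a directory at this path
      - ./docker/syslog/run/log:/dev/log

  syslog-watcher:
    image: busybox
    depends_on:
      - syslog
    # follow the shared log file and print it to the container's output
    command: tail -F /logs/syslog.log
    volumes:
      - ./docker/syslog/logs:/logs
```

The CLI and Supervisor services would mount the same socket in the same way, so every PHP process ends up in one log.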

Summary

After all these steps, one question remains: what is the “magic command” to run all these services?

Almost all services are linked together, so they will start with other services. For development purposes, only logs from the Apache (access logs) and Syslog-watcher services are important to us.
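Such linking might be expressed like this. It is only a sketch of the dependency keys: the article does not state whether the original file used links or depends_on, the exact dependency graph is assumed, and the remaining settings of each service are omitted.

```yaml
# docker-compose.yml fragment (sketch; shows only the dependency keys, other settings omitted)
version: "2"

services:
  apache:
    depends_on:
      # starting apache also starts these services first
      - mysql
      - syslog
      - supervisor

  syslog-watcher:
    depends_on:
      - syslog
```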

With that, the whole environment can be started with this short one-liner:

docker-compose up apache syslog-watcher

Ta-da!